Another uptime monitor

Uptime monitoring is availability monitoring. Websites and services cannot live without it.

Why another uptime monitor website?

I have an ISPConfig3 server with many websites and want to know if these websites are up and running. Of course I started by using the available online uptime services. But how do these services actually work? Are they really telling me everything there is to know? Besides this, there is the issue of privacy. Nobody needs to know the uptime and downtime of the websites I am running and maintaining. That is when I started looking into this.

What is UP and DOWN

Some services tell you what their definition of UP and DOWN is. Most first access your website from the location of their own data center. If this fails, they retry from the same node. If that fails as well, they try to access your website from remote locations. This means that if you use a US-based service, your website is accessed from the US. If you use a UK-based service, your website is accessed from the UK. Only in case of a failure is your website accessed from other locations. Many services offer paid plans where you can specify the locations from which you want your website monitored.

The definition of UP and DOWN is not easy. What is a failure? Does a timeout error really mean there is something wrong with your website? How do we know? Is this a DNS error?
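To make these questions a bit more concrete, here is a minimal sketch of one possible decision rule. The outcome labels, the `Attempt` type and the rule itself are my own assumptions for illustration, not how any particular service works.

```python
from dataclasses import dataclass

# Hypothetical outcome labels for a single attempt.
OK, TIMEOUT, DNS_ERROR, HTTP_ERROR = "ok", "timeout", "dns_error", "http_error"


@dataclass
class Attempt:
    location: str  # e.g. "local" or the name of a remote node
    outcome: str   # one of the labels above


def decide_state(attempts):
    """Return "UP", "DOWN" or "UNCERTAIN" for a list of attempts.

    Assumed rule: any successful attempt means the site is reachable from
    somewhere, so it counts as UP. It is DOWN only when attempts from at
    least two different locations all failed. Failures seen from a single
    location may be a problem on our side, so they stay UNCERTAIN.
    """
    if not attempts:
        return "UNCERTAIN"
    if any(a.outcome == OK for a in attempts):
        return "UP"
    locations = {a.location for a in attempts}
    return "DOWN" if len(locations) >= 2 else "UNCERTAIN"
```

With this rule, a timeout followed by a DNS error from the same node is not enough to call a website DOWN. Only a failure confirmed from a second location is.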

Testing many websites with Python

Can I build some kind of uptime monitor for my websites using Python? Of course you can: use AIOHTTP, an asynchronous HTTP client/server for asyncio and Python. With this package you can query many websites at (almost) the same moment. But asynchronous I/O can also introduce problems that are difficult to debug.
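A minimal sketch of querying many websites concurrently could look like this. The function names, the result layout and the 10 second timeout are assumptions for illustration. For brevity every check opens its own session; a real monitor would probably share one `ClientSession`.

```python
import asyncio
import time


async def check_with_aiohttp(url, timeout_s=10.0):
    """Fetch one URL with aiohttp and return (status_code, error_text)."""
    import aiohttp  # third-party; imported here so the rest runs without it

    timeout = aiohttp.ClientTimeout(total=timeout_s)
    try:
        async with aiohttp.ClientSession(timeout=timeout) as session:
            async with session.get(url) as response:
                return response.status, None
    except asyncio.TimeoutError:
        return None, "timeout"
    except aiohttp.ClientError as exc:
        # Connection and other client-side failures: report the exception type.
        return None, type(exc).__name__


async def check_all(urls, check=check_with_aiohttp):
    """Run all checks concurrently; results come back in the order of urls."""

    async def one(url):
        started = time.monotonic()
        status, error = await check(url)
        elapsed = time.monotonic() - started
        return {"url": url, "status": status, "error": error, "elapsed_s": elapsed}

    return await asyncio.gather(*(one(url) for url in urls))
```

Calling `asyncio.run(check_all(["https://example.com"]))` returns one dictionary per URL. The `check` parameter makes it possible to swap in a fake check when testing without network access.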

From tests to tasks

Once I got this working, I realized that querying a website is just a task. That is why I decided to turn website queries into tasks and to write a task scheduler and a task result processor.
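A minimal sketch of this idea, assuming a task is little more than a URL with an interval. The `Task` fields and the two function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    monitor_id: int
    url: str
    interval_s: int
    next_run: float = 0.0  # epoch seconds; 0 means "run as soon as possible"


def select_due_tasks(tasks, now):
    """Scheduler step: return the tasks that are due and reschedule them."""
    due = [t for t in tasks if t.next_run <= now]
    for t in due:
        t.next_run = now + t.interval_s
    return due


def process_result(task, status, store):
    """Result processor step: record one result (here: append to a list)."""
    store.append({"monitor_id": task.monitor_id, "status": status})
```

The scheduler only decides what must run. Who runs the task, and where, is a separate concern.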

Running tasks on remote machines: Message Queues

We also want to run tasks on other, remote machines. Because the internet can be fast or slow, we add some buffering using message queues. I selected RabbitMQ, a popular message broker. When we select tasks, we send them to our local task queue. We use a 'Shovel' to transfer our tasks to the RabbitMQ instance on our remote machine.
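As an illustration, the sketch below builds the `rabbitmqctl` command for a dynamic shovel that moves messages from a local queue to a queue on a remote broker. It assumes the shovel plugin is enabled; the shovel name, queue names and remote URI are made-up examples.

```python
import json
import shlex


def shovel_command(name, src_queue, dest_uri, dest_queue, src_uri="amqp://"):
    """Return a shell command that defines a dynamic shovel."""
    definition = {
        "src-protocol": "amqp091",
        "src-uri": src_uri,        # "amqp://" means the local broker
        "src-queue": src_queue,
        "dest-protocol": "amqp091",
        "dest-uri": dest_uri,      # hypothetical remote broker
        "dest-queue": dest_queue,
    }
    return "rabbitmqctl set_parameter shovel {} {}".format(
        shlex.quote(name), shlex.quote(json.dumps(definition))
    )


print(shovel_command("tasks-to-node1", "tasks",
                     "amqps://user:password@node1.example.com", "tasks"))
```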

Running tasks on remote machines: Connection

To connect to our remote task executor we have several options. Docker Swarm seems a good choice. It creates a (secure) tunnel to a remote machine and that's it. Because we are already using RabbitMQ for our message queues, we also could use RabbitMQ-to-RabbitMQ (secure) connections over the internet.

Some performance calculations

When you talk about many users having many monitors, you are talking about a lot of data. The performance issues are mainly concentrated in two areas:

- inserting test results

- selecting test result data for (auto-refresh) status pages

Assuming 18.000 users with 10 monitors (tests) each, running at an interval of one minute, we must insert 180.000 records per minute = 3.000 records per second. Mind-blowing! And this may only be the start ...

If we allow auto-refresh status pages, and every status page takes 100 msec to build, then we can generate 600 status pages per minute, or 1200 status pages per two minutes. Not very much! We can (must!) improve this number, for example by comparing the test result with the previous result and only rebuilding a page when something changed.
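One possible reading of "comparing with the previous result" is a small cache that rebuilds a status page only when the results differ from the previous ones. The class and its interface are assumptions for illustration.

```python
class StatusPageCache:
    """Rebuild a status page only when the test results have changed."""

    def __init__(self, build):
        self._build = build        # function: results dict -> page text
        self._last_results = None
        self._page = None
        self.builds = 0            # how often the page was actually rebuilt

    def get(self, results):
        if results != self._last_results:
            self._page = self._build(results)
            self._last_results = dict(results)  # copy, so later changes are noticed
            self.builds += 1
        return self._page
```

As long as all monitors keep reporting the same state, an auto-refresh then costs a dictionary comparison instead of a full page build.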

Some size calculations

What amount of data are we talking about? Again, assuming 18.000 users with 10 monitors (tests) each, running at an interval of one minute, we are inserting 180.000 records per minute. In a day we have 259M records and in a week 1.814M records, or almost 2 billion records. And that is only for one week. With these amounts we know that the performance of our queries will soon get worse.

Some internet data transfer calculations

When we run a task on a remote node, the task program is already at the remote node and we only have to transfer the parameters of this task over the internet. After the task has run, the results must be sent back over the internet.

Again, assuming 18.000 users with 10 monitors (tests) each, running at an interval of one minute, we are running 180.000 tasks per minute. If the node is remote, and the total size of the parameters and result is 1000 bytes per task, then we are transferring 180 MB per minute over the internet. Absurd.

We can make a node smarter by repeating the same test over and over again, without resending the task parameters, but we still have some 100M of result data per minute. We could reduce this by using compression.
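A minimal sketch of compressing a batch of results before sending it, using only the standard library. The JSON layout of a result is an assumption, and how much is saved depends entirely on the real data.

```python
import json
import zlib


def pack_results(results):
    """Serialize a batch of results to compact JSON and compress it."""
    raw = json.dumps(results, separators=(",", ":")).encode("utf-8")
    return zlib.compress(raw, level=6)


def unpack_results(blob):
    """Reverse of pack_results: decompress and parse the JSON."""
    return json.loads(zlib.decompress(blob).decode("utf-8"))
```

Batching matters here: many similar results in one message compress much better than each result on its own.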

Partition by distributing users over new instances

In the above we saw that we can never manage all users with a single database. Fortunately, in our case, User-A has nothing to do with User-B. This means we can partition users over instances of our monitor application, where every instance has its own database.

Example. The first 500 users are assigned to our first instance, the next 500 users are assigned to the second instance, etc.
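The assignment rule from this example can be sketched as follows. The function name is hypothetical and users are assumed to be numbered from 1 in sign-up order.

```python
def instance_for_user(user_number, users_per_instance=500):
    """Return the 1-based instance number for a 1-based user number."""
    if user_number < 1:
        raise ValueError("user_number starts at 1")
    return (user_number - 1) // users_per_instance + 1
```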

Now let's redo the calculations from above. Assuming 500 users with 10 monitors (tests) each, running at an interval of one minute, we must insert 5.000 records per minute, or about 83 records per second. Reasonable!

In a day we have 7.200.000 records and in a week 50.400.000 records, or 50M records. Again reasonable.

The amount of data over the internet is 5.000.000 bytes per minute, or 5 MB. Again reasonable ... If we are dealing with slow connections we can (must) increase the interval time, for example from one minute to two minutes.
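The calculations for both scenarios can be reproduced with a few lines of Python. The function name is hypothetical; the defaults are the assumptions used in the text (10 monitors per user, a one-minute interval, 1000 bytes per task).

```python
def load(users, monitors_per_user=10, interval_s=60, bytes_per_task=1000):
    """Return the record and transfer volumes for a number of users."""
    per_minute = users * monitors_per_user * 60 // interval_s
    return {
        "records_per_minute": per_minute,
        "records_per_second": per_minute / 60,
        "records_per_day": per_minute * 60 * 24,
        "records_per_week": per_minute * 60 * 24 * 7,
        "bytes_per_minute": per_minute * bytes_per_task,
    }


for users in (18_000, 500):
    print(users, load(users))
```

Doubling the interval to two minutes (`interval_s=120`) halves every number.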

Selecting a database

Now it was time to select a database, or maybe even multiple databases. I have webapps running with MySQL and PostgreSQL. But there are also time-series databases (TSDBs) that are optimized for storing and serving time-series data. I looked into this and the thing is that when you select such a database, you have to learn a lot of new things, including how to create queries.

I looked in more detail into TimescaleDB, a time-series database based on PostgreSQL. But you still must rearrange the layout of your test results table, which becomes a so-called hypertable. For example, there is no 'id'-field but a timestamp that must be (made) unique, more or less.

I really want to use SQLAlchemy ORM and Core for all database access, and there are people actually using TimescaleDB with SQLAlchemy. Still, in the end I decided to start with PostgreSQL. Maybe two times slower, but no new learning curve here. Once everything is running, I can look in more detail into time-series databases, and either use TimescaleDB or have a separate time-series database for the test results.

To solve the problem of a huge database and slow queries, I decided to introduce an additional 'week'-table for the test results. Test results are not only inserted into the test results table but also into the 'week'-table. The 'week'-table only holds the data for the last week; older data is deleted. Now we have two inserts, but we also have a 'week'-table that always holds roughly the same amount of data and thus gives predictable query response times.
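A minimal sketch of the 'week'-table idea. To keep it self-contained it uses the standard library `sqlite3` module instead of PostgreSQL with SQLAlchemy, and the table and column names are assumptions.

```python
import sqlite3

WEEK_S = 7 * 24 * 60 * 60
TABLES = ("test_results", "test_results_week")


def create_tables(conn):
    for name in TABLES:
        conn.execute(
            f"CREATE TABLE {name} (monitor_id INTEGER, ts INTEGER, status INTEGER)"
        )


def insert_result(conn, monitor_id, ts, status):
    """Insert one result into both tables in a single transaction."""
    with conn:
        for name in TABLES:
            conn.execute(
                f"INSERT INTO {name} VALUES (?, ?, ?)", (monitor_id, ts, status)
            )


def prune_week(conn, now):
    """Delete everything older than one week from the 'week'-table only."""
    with conn:
        conn.execute("DELETE FROM test_results_week WHERE ts < ?", (now - WEEK_S,))
```

Status pages would then query `test_results_week` only, while the full history stays available in `test_results`.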

Another problem we are facing is what to do when there are system failures. For example, suppose that our test node suddenly stops responding. In this case we should immediately stop sending tasks to this node, and resume only after the node is operational again. How do we know this node is down? One way is to check, before sending a new task, whether the previous task actually did run, i.e. returned a result.
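This check can be sketched as a small gate in front of each node. The class name, its methods and the 120 second limit are assumptions for illustration.

```python
class NodeGate:
    """Block sending to a node while an earlier task has no result for too long."""

    def __init__(self, max_wait_s=120):
        self.max_wait_s = max_wait_s
        self._pending = {}  # task_id -> time the task was sent

    def sent(self, task_id, now):
        self._pending[task_id] = now

    def result_received(self, task_id):
        self._pending.pop(task_id, None)

    def may_send(self, now):
        # The node counts as healthy if no pending task is overdue.
        return all(now - sent_at <= self.max_wait_s
                   for sent_at in self._pending.values())
```

In this sketch the gate opens again only when the overdue result finally arrives. A real system would also need some kind of probe or manual reset, in case that result is lost for good.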

Adding an API

In the above I mentioned that supporting more users means spinning up more instances of our monitor application. This means our monitor application must be a separate, standalone microservice. I already had some experience with FastAPI and thought it would be a good choice for this project.

Problem ... I did not want to repeat myself and first had to implement generic relationship operations, like one-to-one, one-to-many and many-to-many. This took some time but makes it so much easier to add endpoints. I implemented the operations, which gave me API operations like:

- CRUD: /monitors

- One-to-many: /monitors/<monitor_id>/tests

- Many-to-many: /status-pages/<status_page_id>/monitors

Once this was done I added new endpoints, for example to serve the data for status pages.

The frontend

You knew it would be coming: once the API was up and running, I needed a frontend. This frontend should be able to connect to one of multiple monitor APIs. I looked at several options but finally settled on my own multi-language Python CMS. I built this using Flask almost three years ago, and use it for my Python blog. It certainly is not perfect but it also is not that bad. The main advantage is that it can be used to build multi-language websites. We always want the latest and the greatest, meaning that all packages had to be updated to the most recent versions. Despair and headaches.

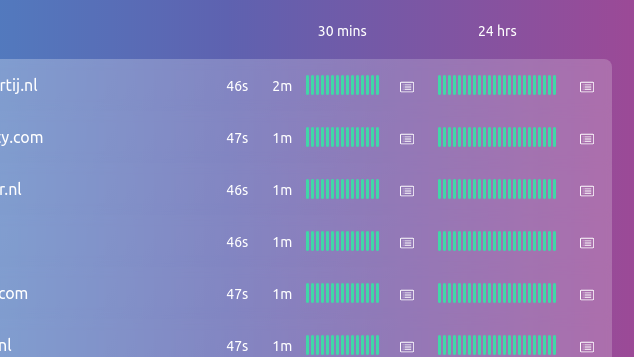

I created a new library to connect to the API, and pages to manage the objects of the API: monitors, tests, status pages. For the moment all data is served to the user by the frontend. For example, a status page is generated by the monitor API. When a user requests a status page, they connect to the frontend, which passes the request to the API. The API generates the status page and the frontend returns this status page to the user. Later we can rearrange this.

July 2022

That is where I am now, July 2022. I have a working system with status 'beta', with only one API and one node. One problem at the moment is that I do not have a proper, manageable email sender. The APIs are running on different instances and every instance should be able to send alert emails, SMS messages, etc. I could use online services but prefer self-hosting for privacy reasons.

Some words about privacy

When you have a webshop you probably do not care about using another online service. But in many cases you should care.

If not for yourself, then care about your clients. Using an online service is another possible privacy breach. Nobody needs to know that you have websites, and nobody needs to know the uptime of these websites. Avoiding an online uptime service can be seen in the same way as avoiding Google Analytics and/or a CDN (Content Delivery Network).

But Peter, now the data is with you? What will you do with my data? You are right. Of course I promise I will not use or sell your data ... :-) But if you really want to be sure then contact me and host the monitor application yourself.

Summary

This is a very intense and complex project because there are many components involved. What I did not do was develop a single monolithic application; instead I am developing an application consisting of microservices. All these microservices must be able to run on their own and need some kind of management. A lot of work waiting ...